AI and the law: Artificial intelligence on trial.

Whether on the factory floor, in autonomous vehicles or in the operating theater, more and more systems with artificial intelligence are being used in the workplace. But who is legally responsible when the technology gets it wrong?

- The increasing use of artificial intelligence raises not just ethical questions but legal issues as well.

- Legislation is lagging behind this development all over the world.

- The EU Commission and expert panels are working on strategies to regulate AI systems.

Anyone who relies completely on the safety of autonomous vehicles could be heading for disaster, as a 2018 case in the US state of Arizona showed: a self-driving Volvo from the fleet of ride-sharing service Uber struck and killed a woman who was crossing the road.

Just under a year later, the public prosecutor responsible for the case decided that Uber was not criminally liable. Experts had initially assumed a software error, but it later emerged that the safety driver had allegedly been watching videos on her smartphone during the drive.

Man or machine: who is to blame?

A similar case was the first fatal accident involving a Tesla driving on Autopilot. Even the best technology does not absolve drivers of the responsibility to keep their eyes on the road, at least for the time being. Autonomous driving is still a comfort feature designed to make long journeys more pleasant. In the future, however, artificial intelligence (AI) is set to take the wheel alone, while passengers work or, as the business model envisages, make use of paid onboard entertainment. The question of who is liable when accidents happen is the first major legal debate of the AI era. So far, car manufacturers have only rarely been held liable for accidents.

Legislative proposals on the way.

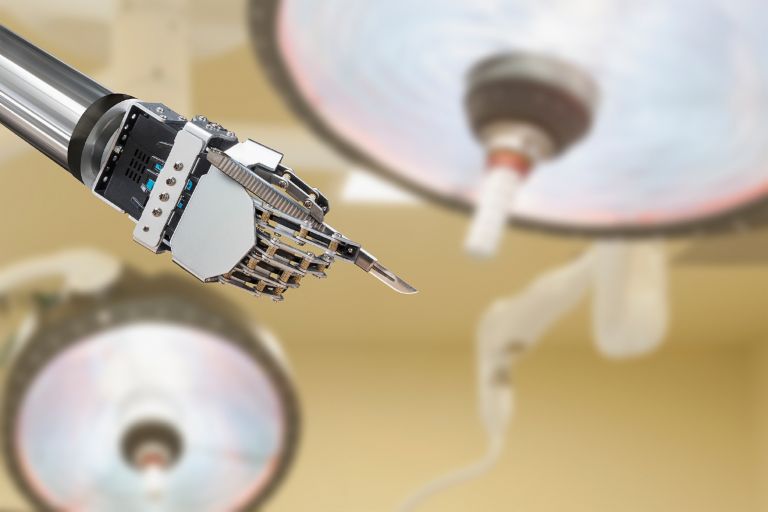

Cars, however, are just one particularly visible application. Artificial intelligence has been making important decisions in many areas for quite some time: image recognition systems flag passers-by as suspects, HR systems filter job applicants and robots assist doctors. So what happens when these machines make mistakes? Is a human, such as the programmer, liable? And who can judge whether the fault lay with the algorithm or with the data it was fed? “At present there is no country in the world with a legal system that makes specific provision for the peculiarities of AI systems and smart robotics,” says legal expert Martin Ebers, president of the Robotics and AI Law Society (RAILS). In 2018 the EU Commission adopted an AI strategy and set up an expert panel to examine, among other things, a reform of product liability legislation.

Artificial intelligence no guarantee against human error.

So far, there have been only a few lawsuits over AI errors. One that attracted a lot of attention concerned an accident at the VW plant in Baunatal, Germany, in which a factory robot fatally injured a 21-year-old worker. The proceedings were dropped because the victim had apparently set up the robot incorrectly himself. Still pending is the first lawsuit of its kind over an AI trading system: an investor from Hong Kong is suing because an AI-powered trading system lost him money. The supercomputer K1 was supposed to manage a USD 2.5 billion portfolio, but on Valentine’s Day 2018 alone it lost USD 20 million.

The investor is suing the service provider Tyndaris for USD 23 million in damages, alleging that the firm exaggerated the AI’s capabilities. Tyndaris, in turn, is suing the investor for USD 3 million in unpaid fees and disputes the claims, saying it never guaranteed a profit.

Photo credits: EyeEm / Getty Images, Cultura / Getty Images, Photodisc / Getty Images